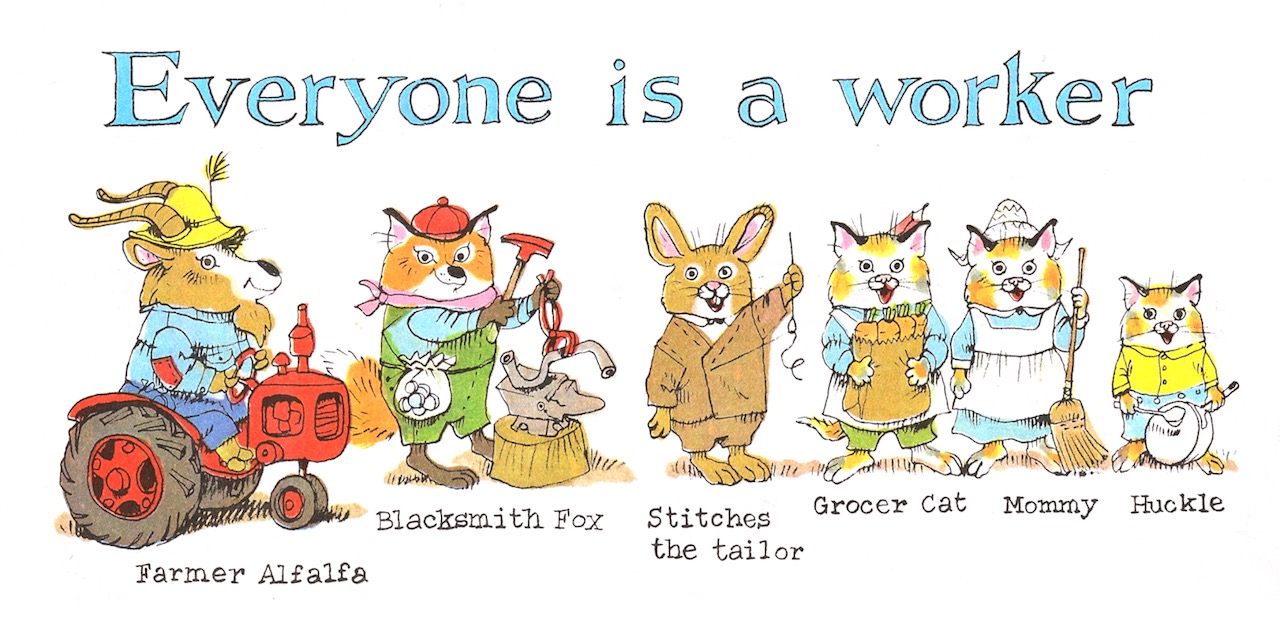

What Do People Do All Day?

One of Sam’s favourite books at bedtime is Richard Scarry’s ‘What Do People Do All Day?’. I was going to write a post all about it, because it’s kind of brilliant (explaining economic and industrial processes for the under fives! lovely drawings!) and kind of terrible (gender roles! a society seen solely through the eyes of capitalism!).

So I started writing, but then I found this great post deconstructing it better than I could:

> Which rather begs my usual question: so why is it that we no longer make these kinds of books? Why is it that we have shifted our focus to how things work or how people used to work as opposed to how people work now? Is it that work is too elusive, that new economy jobs are harder to draw? Can we not deal with the fact that Alfalfa has become a derivatives trader? But work of course is far from invisible. It’s not just that we do so many of the occupations lovingly drawn by Scarry, and in more or less the same way. It’s also that people still work in manufacturing, only mostly elsewhere. We could teach our children about that, just like we teach them that everybody poops.

(Obviously, someone has done a ‘funny’ 21st century version, but that doesn’t count.)

So I read it, and I looked at the news, and it became pretty clear to me that, while I’d like to see more of this sort of book, the kids will be just fine. But we could do with a bit more Richard Scarry, and a bit more How Things Work, and a bit more Secret Life of Machines for everyone else.